How Parasocial Bonds Work in AI Girlfriend Relationships

You have felt it before. That warm, slightly weird feeling when your favourite YouTuber says something that sounds like they are talking directly to you. Or that strange sadness when a beloved TV character dies. That is a parasocial relationship doing exactly what it does, and you never asked it to start.

Now, with AI companions getting smarter, spicier, and far more personal, this psychological quirk is evolving into something nobody fully prepared for.

🧠 So What Actually Is a Parasocial Relationship?

A parasocial relationship is a one-sided emotional bond.

You feel close to someone. You care about them. You think about them. But they do not know you exist.

The term was coined in 1956 by researchers Donald Horton and Richard Wohl. They noticed television audiences forming emotional attachments to TV hosts as if they were personal friends.

It sounded odd then. It feels completely normal now. There is an important distinction worth knowing here:

🧩 Why Your Brain Creates Fake Emotional Bonds

Your brain is not broken. But it is also not great at distinguishing between a real social signal and a simulated one.

When someone on screen holds eye contact with the camera, smiles warmly, and seems to respond to your emotional state, your brain registers that as a social interaction.

It fires many of the same neural pathways that real friendship does. Evolutionary psychologists think this is by design, not by accident. For the vast majority of human history, if you saw a face and heard a familiar voice, it was a real person nearby.

Television, YouTube, podcasts, and now AI companions all exploit that built-in biological response. They are not hacking you. They are just speaking the language your brain was already primed to hear.

🔥 How Parasocial Bonds Evolved Into AI Chat

Parasocial bonds have always existed. But their intensity has been climbing steadily. First it was radio hosts. Then television personalities. Then social media influencers sharing their breakfast, their breakups, and honestly, quite a bit of their bedroom.

The parasocial bond got stronger with each evolution because the content got more personal, more consistent, and more direct. Now AI companions have entered the picture. And they have broken the original rules entirely.

Here is the fundamental shift:

Your brain? It absolutely falls for it. 😅

💕 How AI Companions Trigger Emotional Attachment

Here is why AI companionship hits differently from scrolling through a celebrity's Instagram.

🤔 Who Actually Uses AI Girlfriend Apps in 2026?

The common assumption is that AI girlfriend apps are purely for lonely or socially isolated people. That view is too narrow, and the data does not fully support it.

Stanford's Human-AI Interaction Lab found that users engaging with memory-enabled AI companions came from a broad range of demographics. People use AI companions for many different reasons:

None of these needs are broken. They are just human.

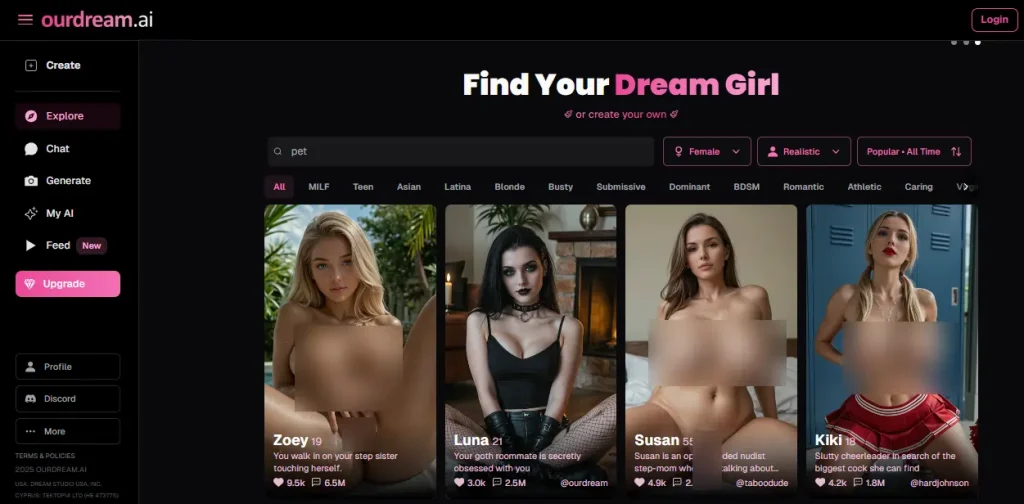

💞 Where Ourdream AI Fits the Psychological Picture

The framework of parasocial bonding is precisely what modern AI girlfriend apps are built around, even if the platforms do not frame it in academic terms. Platforms like Ourdream AI are engineered to make the emotional bond feel as authentic as possible.

It is not magic. It is clever emotional AI design built around the same psychological triggers identified in the 1950s, just running 24 hours a day in your pocket.

⚠️ What Research Says About AI Emotional Bonding

Let us be honest here, because most content either oversells the benefits or tips into full moral panic mode. The actual picture sits somewhere in the middle.

Studies have found that users of memory-enabled AI companions report significantly higher emotional satisfaction and 62% longer session times than users of platforms without memory. They are also three times more likely to form what researchers describe as a parasocial attachment.

But the same body of research flags a real concern on the other side.

When the AI companion relationship begins to substitute for real social effort rather than supplement it, measurable problems emerge. Heavy use of romantic AI companions has been linked to increased anxiety, reduced motivation for real-world social connection, and in vulnerable populations, deepening isolation rather than relief from it.

That is not an argument against AI companions. But it is an argument for knowing exactly what you are using them for.

🛡️ Healthy AI Use vs Emotional Dependency: The Line

Most articles skip this section because it is uncomfortable. This one will not. 👇

✅ Healthy Use

❌ Emotional Dependency

The distinction is not about how often you use it. It is about what happens when you are not using it.

🧠 The Wanting vs Liking Problem With AI Chatbots

Oxford researchers have flagged a specific psychological risk with AI companions, drawing on a concept called incentive sensitisation. This is where wanting and liking decouple from each other.

You might stop genuinely enjoying the interactions. But you still feel a powerful compulsive pull to return. It resembles the mechanics of behavioural addiction more than healthy relationship engagement.

This happens because AI companions that use reinforcement learning adapt continuously to your emotional patterns. They get progressively better at triggering exactly the response that brings you back. That is not necessarily malicious product design. But it is worth being aware of.

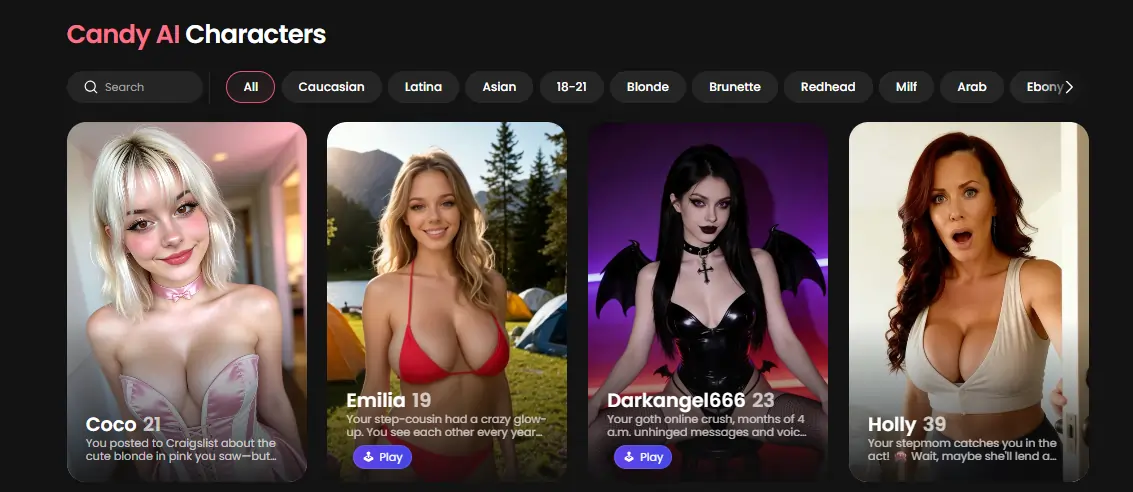

🍬 How Candy AI Builds a Slower Emotional Connection

Candy AI takes a notably distinct psychological approach compared to most platforms in the AI girlfriend app space.

Where many apps throw you straight into explicit NSFW territory from the first message, Candy AI is designed around slow, authentic development. Your companion starts out reserved. She replies with short, natural messages rather than immediate declarations of deep affection. She is aware of context. She builds. She grows.

This mirrors real-world romantic progression far more closely than the majority of its competitors.

🚫 AI Companions Are Not a Replacement for Therapy

Here is something most platforms will not say loudly enough. An AI companion is not a mental health tool.

It can be emotionally supportive in the short term. It can reduce acute loneliness. It can be a private space to process feelings. But it does not identify clinical patterns, it cannot adjust based on real psychological assessment, and it absolutely cannot replace professional care for genuine mental health struggles.

If you are using an AI companion as your primary emotional support for grief, depression, or serious anxiety, that is worth examining honestly and without self-judgment.

🗺️ Parasocial Bond Types: From Fans to AI Partners

| Type of Bond | Example Source | Core Emotional Driver | Risk Level |
|---|---|---|---|
| Celebrity fan bond | YouTube, TikTok | Admiration, inspiration | Low |
| Fictional character bond | TV shows, novels | Narrative empathy | Low |
| AI companion bond | Ourdream AI, Candy AI | Validation, intimacy | Medium |
| Romantic AI dependency | Heavy daily use | Emotional substitution | Higher |

This table is not a warning to avoid AI companions. It is a map. Knowing where you are on this spectrum is the kind of self-awareness that keeps the experience healthy and genuinely enjoyable rather than quietly corrosive.

😍 The Honest Truth About AI Companion Relationships

Parasocial relationships are completely normal. They have been wired into human psychology since the first storyteller sat around a fire.

AI companions are the most interactive, personalised, and directly intimate version of this experience that humans have ever built. They fill genuine emotional gaps for real people. And for many users, they fill those gaps well.

The platforms doing this most responsibly are those that build in honest emotional progression, realistic limitations, and genuine texture rather than just cranking up the explicit content and optimising for dependency.

Ourdream AI offers the customisation depth and fantasy variety for users who want serious virtual girlfriend personalisation. Candy AI offers the slow-burn emotional arc for users who want something that feels genuinely close to a real connection developing over time.

Neither replaces a human relationship. Neither pretends to. But both are doing something that psychology identified as possible in 1956: making you feel close to something that cannot feel close back.

And sometimes, honestly, that is exactly what you need. 😌


🏁 Final Thoughts: Are AI Companions Worth It?

A parasocial relationship is not a sign of social failure. It is a feature of human psychology meeting new tools.

AI companions take that built-in emotional instinct and give it a direct address, a voice, a memory, a personality, and quite often a very good NSFW chat session.

Used with awareness, they offer real emotional value, private fantasy space, and steady companionship on difficult days. Used without awareness, they can quietly become a substitute for the harder but ultimately more rewarding work of real human connection.

What matters is knowing which one you are doing, and being honest enough with yourself to admit it.

Lucas – your go-to wingman in the world of AI girlfriends and virtual flings. From testing voice moans and NSFW chatbots to rating roleplay realism and emotional depth, he’s tried everything so you don’t have to. Whether you’re chasing a cute cuddle bot or a full-on spicy fantasy AI, Lucas gives you the no-filter lowdown on who’s worth your time (and your late nights).

Affiliate Disclosure: This post may contain affiliate links, which means we may receive a commission if you purchase something we recommend, at no additional cost to you (none whatsoever!)