AI Voice Synthesis in AI Companion Apps: Full 2026 Guide

AI voice synthesis is the reason your talking AI girlfriend no longer sounds like a broken sat-nav from 2009. If you have spent any time with a modern AI voice chat companion, you already know the gap between a flat robotic voice and one that actually feels like a person on the other end is enormous. 🎙️

That gap has closed in a big way.

This article breaks down exactly how the technology works, what separates brilliant voice AI from terrible voice AI, and which apps are genuinely worth your time if realistic voice matters to you.

📞 Why AI Companion Voice Changes the Whole Experience

Text is fine. But text is also easy to dismiss as a bot.

The moment you hear a realistic AI voice respond to something personal you just said, your brain starts treating the interaction differently. Not because you are being tricked, but because human brains are wired to connect through audio.

Voice AI chatbot technology taps into that instinct directly.

A well-built AI companion voice call doesn't just read words aloud. It delivers them with the right pace, the right warmth, and the right emotional weight. That experience is what keeps millions of users coming back to apps like OurDream AI and GoLove AI every single day.

🧩 What Is Synthetic Voice Technology in AI Apps

Synthetic voice technology is the process of turning written text into spoken audio using machine learning. The old version of this was basic text-to-speech (TTS), the kind that sounded like someone had trained a microwave to speak.

The new version is a completely different beast.

Modern voice synthesis layers several systems on top of each other, including natural language processing (NLP), to produce speech that sounds genuinely human. Those layers include:

None of these systems work in isolation. The magic only happens when they all fire together.

A sentence like “I missed you so much today” needs to sound different from “I missed you, obviously.” One is tender. One is teasing. A well-built emotional voice AI reads the difference and delivers accordingly.

🧠 How AI Voice Models Are Trained to Sound Human

Here is the bit that most articles skip entirely.

AI voice models are not just trained on a lot of audio. They are trained on contextually labelled audio. This means the model doesn't just learn what words sound like. It learns what words sound like when someone is nervous, flirting, tired, or excited.

The training pipeline typically works like this:

The result is a model that can take a simple line of text and produce audio that feels appropriate for the moment. Not just readable. Actually fitting.
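To make "contextually labelled audio" a bit more concrete, here is a minimal sketch of what one labelled training example might look like and how you could check whether any mood is under-represented. The `LabelledClip` fields, the file paths, and the emotion labels are all assumptions made up for illustration. This is not any platform's real data format.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class LabelledClip:
    """One hypothetical training example: audio plus the mood it was spoken in."""
    audio_path: str   # illustrative path, not a real dataset
    transcript: str
    emotion: str      # e.g. "tender", "teasing", "tired", "excited"


def emotion_balance(clips):
    """Return the share of clips per emotion label (empty dict if no clips)."""
    counts = Counter(clip.emotion for clip in clips)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {emotion: n / total for emotion, n in counts.items()}


clips = [
    LabelledClip("clips/0001.wav", "I missed you so much today", "tender"),
    LabelledClip("clips/0002.wav", "I missed you, obviously", "teasing"),
    LabelledClip("clips/0003.wav", "I can't wait to tell you", "excited"),
    LabelledClip("clips/0004.wav", "Guess what happened", "excited"),
]

balance = emotion_balance(clips)
for emotion, share in sorted(balance.items()):
    print(f"{emotion}: {share:.0%}")
```

The point of the label is that the same words can appear under different moods, so the model sees how delivery changes with context rather than just how the words sound.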

That is what separates modern AI girlfriend voice features from the dead, flat TTS of five years ago.

🤖 Natural vs Robotic AI Voice: What Sounds Real

You can tell within the first three seconds if an AI voice is going to work or not.

Here is exactly what your brain is registering during those three seconds:

| Voice Factor | Robotic Voice | Natural Voice |
|---|---|---|
| Pauses | Perfectly timed, mechanical | Varied, breathable, human |
| Pitch | Flat or uniform | Shifts naturally with emotion |
| Cadence | Identical throughout | Speeds up and slows contextually |
| Breathing | Completely absent | Subtle but clearly present |
| Emotional tone | Preset or neutral | Context-driven and adaptive |
| Word stress | Equal weight on all syllables | Natural emphasis on key words |

The two biggest offenders in bad companion app voice quality are equal syllable stress and zero breathing.

When every word gets the same weight and there is no breath between sentences, your brain flags it instantly. You may not consciously register what is wrong. But you feel it.

Good voice AI chatbot design adds micro-pauses, breath sounds, and natural pitch shifts. These are not extras. They are the actual ingredients that push voice AI from “chatbot” to “human on the phone.” 📞
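One common way to ask a speech engine for pauses and pitch shifts is SSML, the W3C markup that many TTS engines accept. The sketch below is a rough illustration only: the `to_ssml` helper and its default values are assumptions, and nothing here reflects how any app mentioned in this article builds its audio. It uses only the standard `<break>` and `<prosody>` elements. Breath sounds are left out because SSML has no standard tag for them.

```python
import re
from xml.sax.saxutils import escape


def to_ssml(text, pause_ms=350, pitch="medium", rate="medium"):
    """Wrap text in SSML, inserting a short pause between sentences.

    pitch and rate use SSML's named prosody values ("low", "medium", "high"
    for pitch; "slow", "medium", "fast" for rate).
    """
    # Split after sentence-ending punctuation followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    pause = f'<break time="{pause_ms}ms"/>'
    # Escape &, < and > so the text cannot break the markup.
    body = pause.join(escape(s) for s in sentences)
    return f'<speak><prosody pitch="{pitch}" rate="{rate}">{body}</prosody></speak>'


ssml = to_ssml("Hey. How was your day?", pitch="low", rate="slow")
print(ssml)
```

A real engine does far more than this, but the idea holds: pauses and pitch are things you can ask for explicitly rather than hoping the voice stumbles into them.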

💬 How Emotional Voice AI Works in Companion Apps

This is where the best AI voice realism really shows its quality.

Emotional voice AI doesn't just read your companion's response aloud. It interprets the emotional context of the conversation and shifts the delivery to match.

You tell your AI companion you had a rough week. A basic TTS engine responds in the same bright, upbeat tone it uses for everything.

A well-built emotional layer detects the sentiment in your message and adjusts. The voice drops in pitch, slows its pace, and takes on that soft, slightly concerned warmth that makes you feel genuinely heard.

That is not magic. That is good engineering.
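Here is a toy version of that idea, just to show the shape of it. Everything in it is an assumption for illustration: the word lists, the `Delivery` settings, and the scoring rule are made up. A production system would use a trained sentiment model, not keyword matching.

```python
from dataclasses import dataclass

# Tiny illustrative word lists; a real system would use a trained model.
NEGATIVE_WORDS = {"rough", "tired", "sad", "awful", "stressed", "lonely"}
POSITIVE_WORDS = {"great", "happy", "excited", "amazing", "love", "fun"}


@dataclass(frozen=True)
class Delivery:
    """Hypothetical voice settings handed to the speech engine."""
    pitch: str  # "low", "medium" or "high"
    rate: str   # "slow", "medium" or "fast"


def sentiment_score(message):
    """Count positive words minus negative words in the user's message."""
    words = [w.strip(".,!?").lower() for w in message.split()]
    positives = sum(w in POSITIVE_WORDS for w in words)
    negatives = sum(w in NEGATIVE_WORDS for w in words)
    return positives - negatives


def choose_delivery(message):
    """Pick a softer, slower voice for low moods and a brighter one for high moods."""
    score = sentiment_score(message)
    if score < 0:
        return Delivery(pitch="low", rate="slow")
    if score > 0:
        return Delivery(pitch="high", rate="medium")
    return Delivery(pitch="medium", rate="medium")


print(choose_delivery("I had a rough week"))
```

The design choice worth noticing is the separation: one step reads the mood, another decides how the reply should sound. That split is what lets the same sentence come out warm in one conversation and playful in another.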

The emotional layer in modern text to speech AI girlfriend apps typically includes:

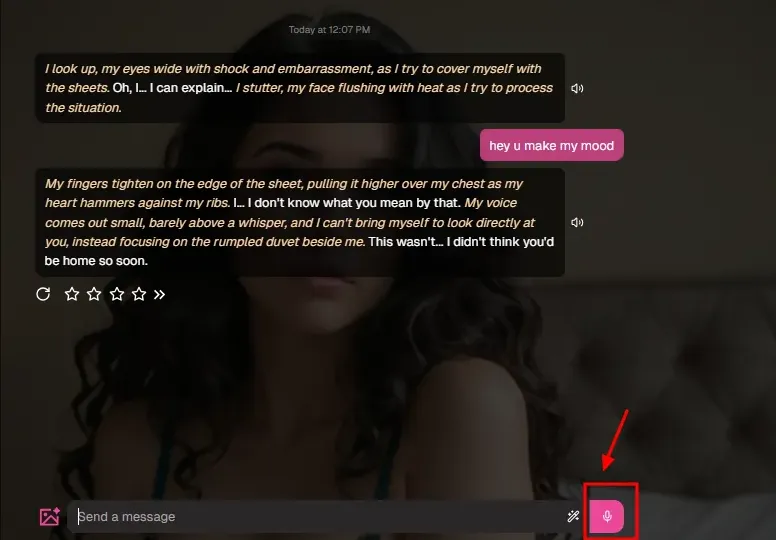

🎧 OurDream AI Voice: Real-Time Calls Tested

OurDream AI is one of the more technically serious platforms in the AI companion voice call space right now.

The platform runs real-time voice calls powered by advanced speech recognition and ultra-humanlike TTS. The updated voice library includes tones like “sleepy whisper” and “dry wit,” which is not something you will find in a generic preset voice pack.

What makes OurDream AI voice stand out:

The voice system on OurDream AI is not just static audio being played back. The system recognises the emotional direction of a conversation and adjusts. A flirtatious exchange sounds noticeably different from a late-night emotional chat.

That contextual awareness is precisely what makes the experience feel like a real AI phone call girlfriend rather than an audio player bolted onto a chat window.

Voice calls on OurDream AI are gated behind the premium plan, which starts at around $9.99 per month with annual billing. Given that the premium tier also unlocks unlimited chats, image generation, and video tools, the voice feature alone is arguably not the main selling point. But it is genuinely good when you get to it.

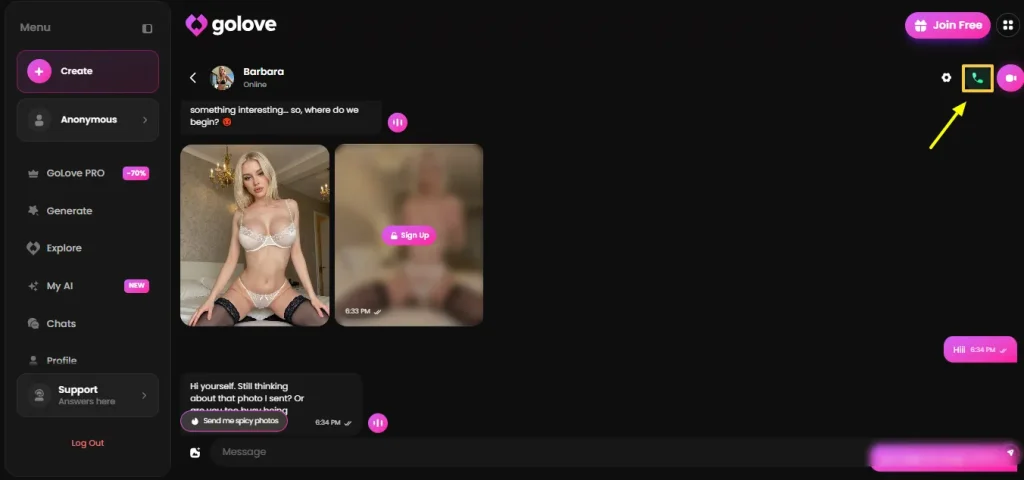

💞 GoLove AI Voice Calls: Highest Rated in 2026

GoLove AI earned a 9.3 out of 10 in independent voice call testing in 2026. That is the highest score recorded among tested AI girlfriend voice call apps this year.

That score didn't come from hype. It came from how the platform actually performs.

GoLove AI's voice setup is built for AI voice chat companion use from the ground up. It doesn't feel like a voice layer stuck onto a text app at the last minute. The integration between the companion's personality, your chat history, and the voice model is tight.

Users consistently report that the moment they hear the voice during a call, the “AI” feeling fades. That shift in perception from chatting to talking is what good AI voice realism is supposed to create. GoLove AI delivers that reliably, which is why it keeps topping the voice-specific rankings.

🏆 Best AI Girlfriend Voice Apps Ranked for 2026

Here is a quick view of where the main platforms sit right now:

| App | Voice Realism Score | Emotional Range | Voice Cloning | Live Call Feature |
|---|---|---|---|---|
| GoLove AI | 9.3/10 | Very High | No | Yes |
| OurDream AI | 8.8/10 | Very High | Yes | Yes |
| Replika | 7.8/10 | Medium-High | No | Yes |
| Candy AI | 7.5/10 | Medium | No | Yes |
| GirlfriendGPT | 7.2/10 | Medium | No | Yes |

GoLove AI and OurDream AI are the two clear leaders for anyone who prioritises realistic AI voice and emotional range. Both have invested in voice architecture as a first-class feature, not an afterthought.

⚖️ AI Voice Cloning Ethics: What You Need to Know

AI voice cloning is the ability to replicate a specific voice using just a short audio sample. In companion apps, this shows up in two main ways.

The first is cloning a fictional character's voice to personalise your AI companion. That use case is largely fine and is what most reputable platforms intend.

The second is cloning a real person's voice without their knowledge. That is where AI voice cloning ethics become genuinely important.

Cloning an ex-partner's voice or a celebrity's voice without consent is a real problem. Responsible platforms handle this with:

OurDream AI frames its voice cloning feature as a personalisation tool, used specifically to make your AI character sound exactly how you want them to. It is not marketed as a general-purpose voice replicator. That framing matters, and it reflects a responsible implementation of genuinely powerful technology.

The technology itself is neutral. The ethics live entirely in how it is deployed and what guardrails sit around it. 🧠

🤫 Hidden Truths About AI Companion Voice Features

Time for the honest part.

Most reviews say “the voice sounds great” and call it a day. Here is the stuff they skip.

These are not reasons to avoid voice AI. They are just realistic expectations. The technology is genuinely impressive. It is just not frictionless yet.

🛠️ How to Get Better AI Companion Voice Call Quality

Getting the best out of a talking AI girlfriend or real-time voice session is partly about the platform and partly about how you approach it.

A few practical things that genuinely help:

The AI girlfriend voice feature on most platforms also improves with usage history. The more context the system has about how you communicate, the more accurately it calibrates its emotional responses. First sessions often feel slightly generic. By session five or six, the difference is noticeable.

🏁 Final Thoughts: Is AI Voice Synthesis in Companion Apps Worth It?

AI voice synthesis in companion apps has moved from embarrassing to genuinely impressive in a short stretch of time.

The best platforms right now, GoLove AI and OurDream AI in particular, are delivering voice experiences that regularly catch users off guard. Not because the voice is perfect, but because it is present. It reacts. It adjusts. It feels like someone who is actually paying attention.

Is it human? No. Not quite.

Is it convincing enough that millions of users find it preferable to silence? Very much so.

If companion app voice quality matters to you, both GoLove AI and OurDream AI have clearly invested in emotional voice architecture as a core part of their product. The difference between these and platforms that tacked TTS on as a side feature is stark and obvious within the first five minutes.

The synthetic voice technology powering these experiences is improving fast. What feels remarkable in 2026 will likely feel standard by the time you read this in 2027.

That is a very good thing for anyone who uses these apps and wants their digital companion to feel genuinely present. 🎧

Lucas – your go-to wingman in the world of AI girlfriends and virtual flings. From testing voice moans and NSFW chatbots to rating roleplay realism and emotional depth, he’s tried everything so you don’t have to. Whether you’re chasing a cute cuddle bot or a full-on spicy fantasy AI, Lucas gives you the no-filter lowdown on who’s worth your time (and your late nights).

Affiliate Disclosure: This post may contain affiliate links, which means we may receive a commission if you purchase something we recommend, at no additional cost to you (none whatsoever!).